AT&T has contributed its specifications for a Distributed Disaggregated Chassis (DDC) white box architecture to the Open Compute Project (OCP). The contributed design aims to define a standard set of configurable building blocks to construct service provider-class routers, ranging from single line card systems, a.k.a. “pizza boxes,” to large, disaggregated chassis clusters. AT&T said it plans to apply the design to the provider edge (PE) and core routers that comprise its global IP Common Backbone (CBB).

“The release of our DDC specifications to the OCP takes our white box strategy to the next level,” said Chris Rice, SVP of Network Infrastructure and Cloud at AT&T. “We’re entering an era where 100G simply can’t handle all of the new demands on our network. Designing a class of routers that can operate at 400G is critical to supporting the massive bandwidth demands that will come with 5G and fiber-based broadband services. We’re confident these specifications will set an industry standard for DDC white box architecture that other service providers will adopt and embrace.”

AT&T’s DDC white box design, which is based on Broadcom’s Jericho2 chipset, calls for three key building blocks:

AT&T points out that the line cards and fabric cards are implemented as stand-alone white boxes, each with its own power supplies, fans and controllers, and that backplane connectivity is replaced with external cabling. This approach enables massive horizontal scale-out, as system capacity is no longer limited by the physical dimensions of the chassis or the electrical conductance of the backplane. Cooling is significantly simplified, as the components can be physically distributed if required. The strict manufacturing tolerances needed to build a modular chassis and the possibility of bent pins on the backplane are avoided entirely.

“We are excited to see AT&T's white box vision and leadership resulting in growing merchant silicon use across their next generation network, while influencing the entire industry,” said Ram Velaga, SVP and GM of Switch Products at Broadcom. “AT&T's work toward the standardization of the Jericho2 based DDC is an important step in the creation of a thriving eco-system for cost effective and highly scalable routers.”

“Our early lab testing of Jericho2 DDC white boxes has been extremely encouraging,” said Michael Satterlee, vice president of Network Infrastructure and Services at AT&T. “We chose the Broadcom Jericho2 chip because it has the deep buffers, route scale, and port density service providers require. The Ramon fabric chip enables the flexible horizontal scale-out of the DDC design. We anticipate extensive applications in our network for this very modular hardware design.”

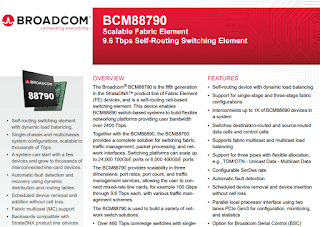

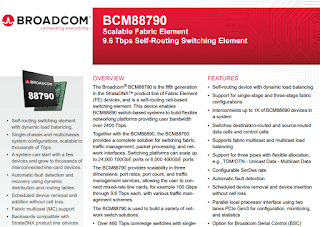

Broadcom announced commercial availability of its Jericho2 and FE9600 chips, the next generation of its StrataDNX family of system-on-chip (SoC) Switch-Routers.

The Jericho2 silicon boasts 10 Terabits per second of Switch-Router performance and is designed for high-density, industry standard 400GbE, 200GbE, and 100GbE interfaces. Key features include the company's "Elastic Pipe" packet processing, along with large-scale buffering with integrated High Bandwidth Memory (HBM).

The new device is shipping within 24 months of its predecessor, Jericho+. Jericho2 delivers 5X higher bandwidth at 70% lower power per gigabit.

In addition to Jericho2, Broadcom is shipping FE9600, the new fabric switch device with 192 links of the industry's best performing and longest-reach 50G PAM-4 SerDes. This device offers 9.6 Terabits per second of fabric capacity and delivers a 50% reduction in power per gigabit compared to its predecessor, the FE3600.
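The 9.6 Terabits per second figure is consistent with the link count and the nominal per-link rate quoted above. The following is a minimal sketch of that arithmetic, assuming each of the 192 links carries a nominal 50 Gb/s and ignoring any encoding or framing overhead; the function and constant names are illustrative and do not come from Broadcom.

```python
# Illustrative arithmetic only: names here are hypothetical, not a Broadcom API.

def fabric_capacity_tbps(links: int, gbps_per_link: float) -> float:
    """Return aggregate capacity in Tbps for a number of equal-rate links."""
    return links * gbps_per_link / 1000.0

FE9600_LINKS = 192   # link count quoted in the article
SERDES_GBPS = 50     # nominal 50G PAM-4 rate (assumption: no overhead deducted)

capacity = fabric_capacity_tbps(FE9600_LINKS, SERDES_GBPS)
print(f"FE9600 nominal fabric capacity: {capacity:.1f} Tbps")
```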

“The Jericho franchise is the industry’s most innovative and scalable silicon used today in various Switch-Routers by leading carriers,” said Ram Velaga, Broadcom senior vice president and general manager, Switch Products. “I am thrilled with the 5X increase in performance Jericho2 was able to achieve over a single generation. Jericho2 will accelerate the transition of carrier-grade networks to merchant silicon-based systems with best-in-class cost/performance.”

Arrcus, a start-up that offers a hardware-agnostic network operating system for white box switches, announced multiple high-density 100GbE and 400GbE routing solutions for hyperscale cloud, edge, and 5G networks.

The company says its ArcOS software architecture has the foundational attributes to scale out to an open aggregated routing solution, enabling operators to design, deploy, operationalize, and manage their infrastructure across multiple domains in the network.

"Our mission is to democratize the networking industry by providing best-in-class software, the most flexible consumption model, and the lowest total cost of ownership for our customers; we are now extending this by providing leading-edge open integration solutions for routing. ArcOS is the essential link to fully realize the unparalleled advancements in the 10Tbps Jericho2 SoC family and the resulting systems," said Devesh Garg, co-founder and CEO of Arrcus.

The new ArcOS-based platforms, built on Broadcom’s 10Tbps, highly flexible and programmable StrataDNX Jericho2 switch-router system-on-a-chip (SoC), include:

- 24 ports of 100G + 6 ports of 400G

- 40 ports of 100G

- 80 ports of 100G

- 96 ports of 100G
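
To compare the listed configurations, the nominal front-panel bandwidth of each can be totaled from its port counts. This is a rough sketch that assumes every port runs at its nominal rate; the dictionary labels are shorthand invented here, and the result says nothing about how many Jericho2 devices each platform uses.

```python
# Port mixes taken from the list above; labels are illustrative shorthand.
PLATFORMS = {
    "24x100G + 6x400G": [(24, 100), (6, 400)],
    "40x100G": [(40, 100)],
    "80x100G": [(80, 100)],
    "96x100G": [(96, 100)],
}

def aggregate_tbps(port_groups):
    """Sum (port_count * port_speed_gbps) over all groups, returned in Tbps."""
    return sum(count * gbps for count, gbps in port_groups) / 1000.0

totals = {name: aggregate_tbps(groups) for name, groups in PLATFORMS.items()}

for name, tbps in totals.items():
    print(f"{name}: {tbps:.1f} Tbps nominal front-panel bandwidth")
```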